description:

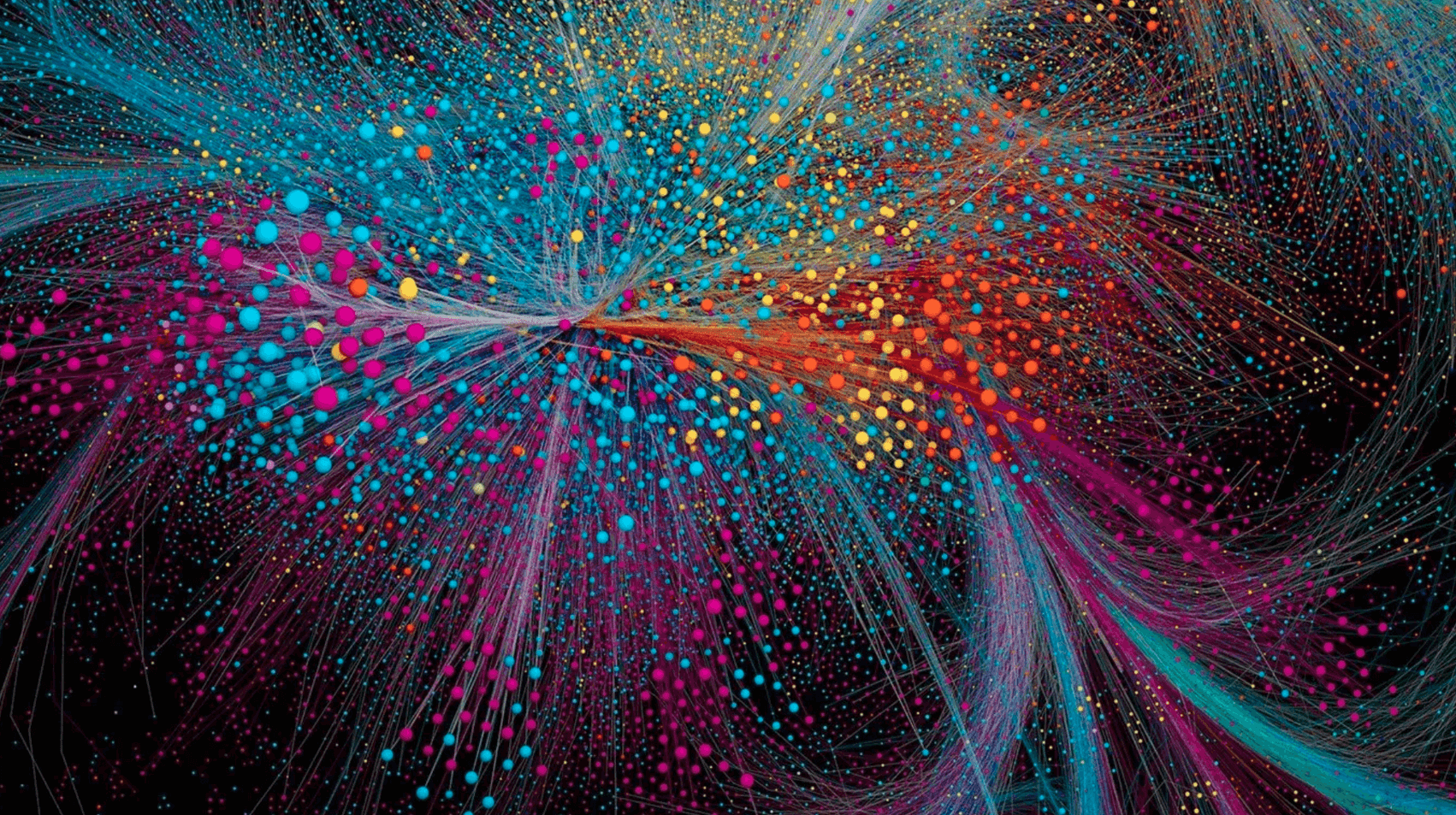

The science of information is the most influential, yet perhaps least appreciated, field in science today. Never before in history have we been able to acquire, record, communicate, and use information in so many different forms. Never before have we had access to such vast quantities of data of every kind. This revolution goes far beyond the limitless content that fills our lives, because information also underlies our understanding of ourselves, the natural world, and the universe. It is the key that unites fields as different as linguistics, cryptography, neuroscience, genetics, economics, and quantum mechanics. And the fact that information bears no necessary connection to meaning makes it a profound puzzle that people with a passion for philosophy have pondered for centuries.